
Why I Write Dirty Code: Code Quality in Context

by Adam Tornhill, October 2019

I used to obsess about code quality earlier in my career. My experience indicates that I'm in good company here; a lot of us developers spend so much time growing our programming skills, and that kind of drive comes from intrinsic motivation. We care about what we do, and we want to do it well.

However, over the past decade I have noticed a change in how I approach code quality. I still consider code quality important, but only in context. In this article I want to share the heuristics I use to decide when high-quality code is called for, and when we can let it slip. It's all about using data to guide when and where to invest in higher code quality, and how it can be a long-term saving to compromise it. Let's start by uncovering the motivation for investing in code quality.

Code quality only matters in context

Code quality is a broad and ill-defined concept. To me, code quality is all about understandability, because code is read far more often than it's modified. In fact, most of our development time is spent trying to understand existing code just so that we know how to change it. We developers don't actually write code. Our primary task is to read code, and the easier our code is to reason about, the cheaper it is to modify.

Now, let's pretend for a moment that we know that a particular piece of code will never ever be modified again. Would that change how you write that code? It should, at least in a world where business factors like time to market matter. So what would we do differently in this hypothetical scenario? Well, code comments would be the first thing to go -- the machine doesn't care about them anyway, and since the code won't ever be touched again, comments don't serve any real need. The same goes for design principles like loose coupling, high cohesion, and DRY -- none of those matter unless we have to revisit the code again.

Of course, quick and dirty code is all fun and games until we need to revisit it, understand it, and modify it. That's where the costs come. These delayed costs may be much higher than what it would have required to design the code properly in the first place, simply because we need to re-learn a part of the solution domain that is no longer fresh in our head. Since we cannot know up front if our code will be modified again or not, we learn to err on the safe side; better to make all code as clean as possible. That way we avoid unpleasant future surprises.

With that reservation, I will claim that doing a quick and dirty solution is almost always a faster short-term solution (before you burn the heretic, please note that I emphasized short-term). But could it also be a viable long-term saving? Can we trade quality for speed in the long run as well? It turns out that we can in certain situations.

When I reason about code, I'm not only looking at the code. I have just as much interest in understanding its temporal characteristics and trends, which is information we can mine from version-control history. The key concept I use for code quality decisions is hotspots.

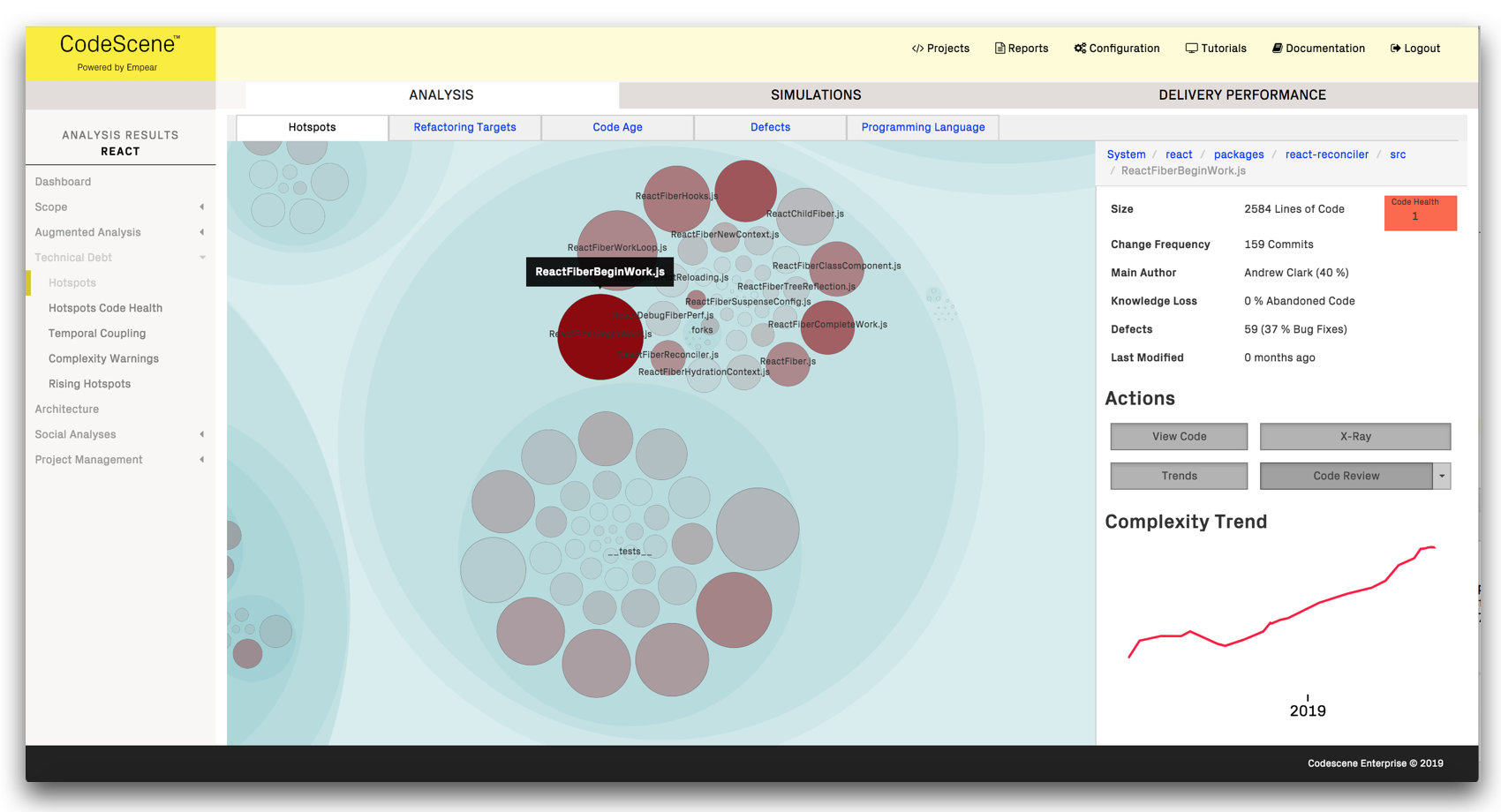

A hotspot is code with high development activity and frequent churn. It's code that's worked on often, and hence code that has to be read and understood frequently, potentially by multiple authors. Here's an example from React.js, and a link to the interactive hotspot map:

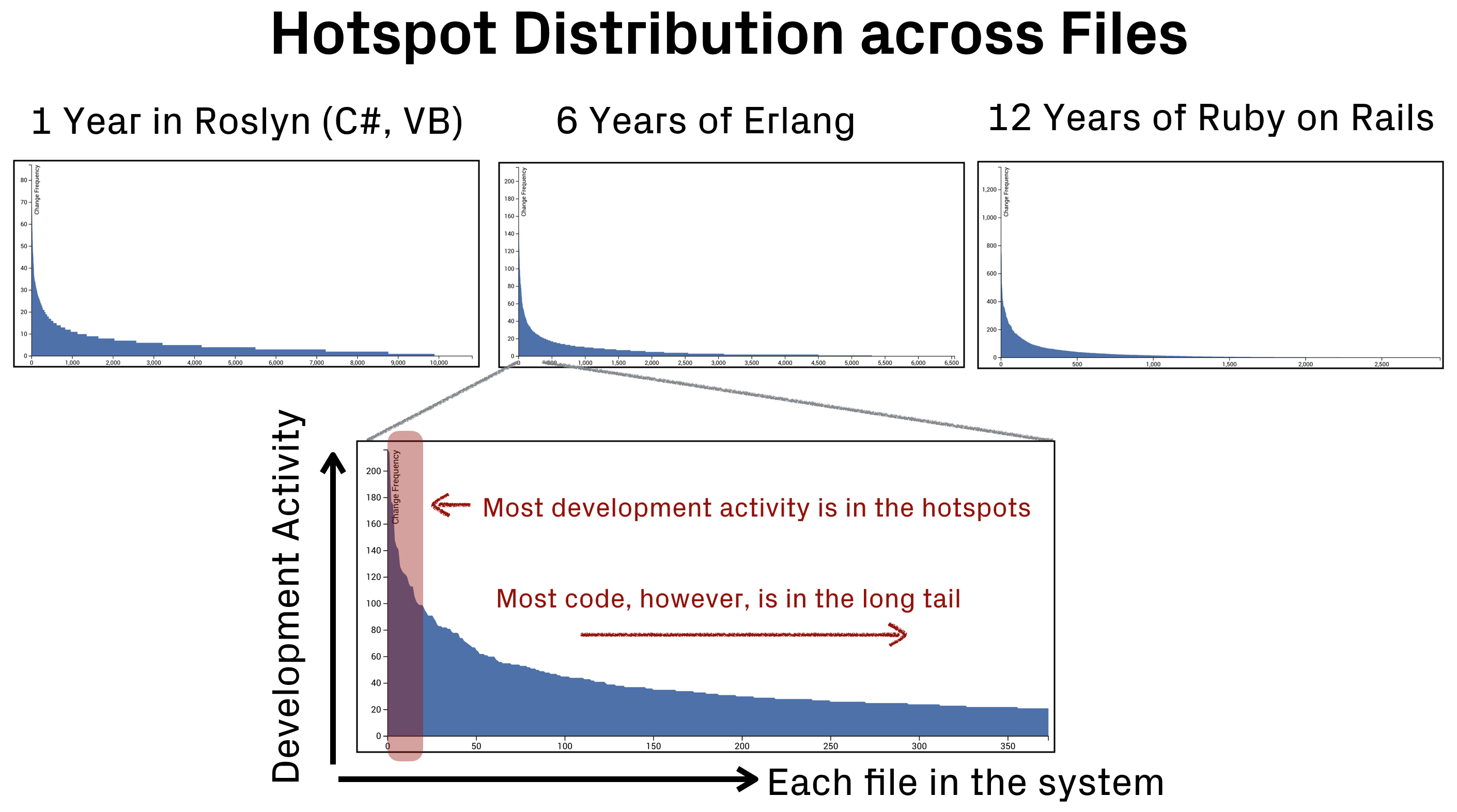

The interesting thing with hotspots is that they make up a relatively small part of the code, often just 1-3% of the total code size. However, that small portion of the code tends to attract a disproportionate amount of development activity. This becomes apparent if we plot the change frequency of each file in a codebase:

As you see in the preceding figure, most of your code is likely to be in the long tail. That means it's code that's rarely if ever touched. The hotspots, on the other hand, only make up a small part of the code but attract most of the development work.
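To make the idea of change frequency concrete, here is a minimal sketch of how it could be mined from a Git history. It is an illustration only, not the analysis CodeScene performs: the function names (`change_frequencies`, `read_git_log`, `top_share`) are made up for this example, and it assumes that "change frequency" simply means the number of commits that touched a file.

```python
import subprocess
from collections import Counter


def read_git_log(repo_path: str = ".") -> str:
    """Return the files touched by each commit, one path per line.

    Uses `git log --name-only --format=`, which prints only the changed
    file names, with blank lines separating commits.
    """
    result = subprocess.run(
        ["git", "-C", repo_path, "log", "--name-only", "--format="],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def change_frequencies(log_text: str) -> Counter:
    """Count how many commits touched each file (assumed definition)."""
    return Counter(
        line.strip() for line in log_text.splitlines() if line.strip()
    )


def top_share(freqs: Counter, fraction: float) -> float:
    """Share of all file changes attracted by the most-changed files.

    `fraction` is the portion of files to treat as candidate hotspots,
    e.g. 0.03 for the top 3%. At least one file is always included.
    """
    if not freqs:
        return 0.0
    n = max(1, int(len(freqs) * fraction))
    top = sum(count for _, count in freqs.most_common(n))
    return top / sum(freqs.values())
```

Running `top_share(change_frequencies(read_git_log()), 0.03)` inside a repository gives a rough feel for how much of the activity lands in the top 3% of files. Sorting `freqs.most_common()` and plotting the counts yields the kind of long-tail curve described above.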

This implies that any code quality issues or technical debt in the hotspots, however minor, are likely to be expensive. Really expensive. Since the code is worked on so often, the additional costs of understanding the code and of making sure it doesn't break multiply quickly. This is where code quality matters the most.

I have used hotspot analyses in my daily work for the past 10 years. It's been a real game changer that I'm taking full advantage of. Here's how: occasionally, I need to expand or tweak a feature that's been stable for a long time, so I find myself operating in the long tail of change frequencies. To me, that's an opportunity to make the bet that the code will continue to remain stable. You see, code often turns into a hotspot because it implements a particularly volatile area of the domain where requirements are evolving. Stable code usually represents stable parts of the domain too. That allows me to take short cuts.

For example, when working on code in the long tail I might realize that my new code is similar to some existing responsibility. So I copy-paste the original and tweak the copy to do what I want. Or I decide to add one more parameter to a function instead of looking for a concept to encapsulate. Or maybe I decide to squeeze an extra if-statement into already tricky code. Yes, there's truly no limit to the sins I commit. But I only compromise quality in the long tail, and I only do it if I estimate that it will save me time.

On the other hand, once I work in hotspots, I'm well aware that the code will be worked on again; the best predictor of future activity is the code's history. So I take care in designing the code, and I often start by refactoring the existing solution to get a better starting point. Not only will this make life simpler for my colleagues; my future self is going to love me too.

Take calculated risks

Before you walk into your manager's office to announce that this crazy Swedish programmer claims that we can write crappy code and benefit from it, I think it's only fair to point out that I do have some rules to control the risk. It has become sort of an informal process and safety-net that guides me in my day job.

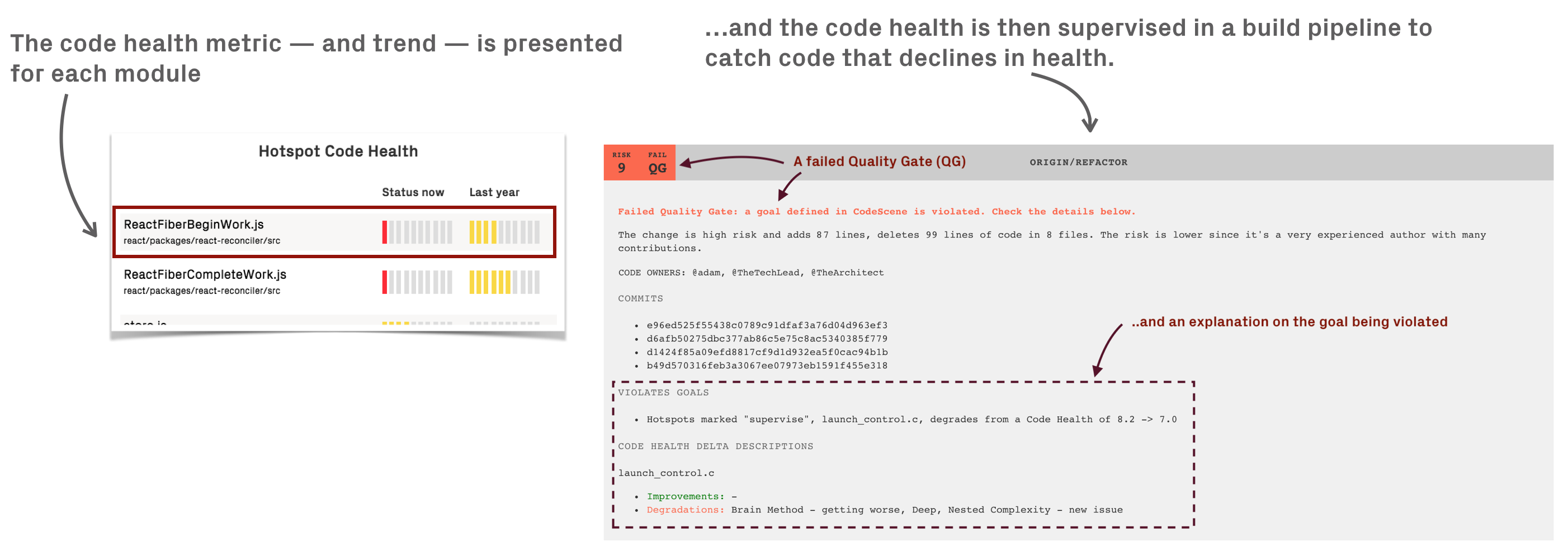

First of all, I know exactly how healthy my code is. I know the code health of each module in the codebase where I work thanks to CodeScene. CodeScene's Code Health metric was heavily researched and designed specifically for estimating how hard code would be for a human to understand. The code health metric doesn't care about the subjective stuff, like coding style or if a public constructor should lack a comment, but instead aims to catch the properties of code that really matter for maintainability.

Knowing the health of your code -- at any time -- is fundamental. Once you have that knowledge, you can start to apply technical debt in its original sense. That is, you can take on technical debt strategically. And if you balance the amount of debt you take on based on the hotspot criteria, then it might even become an interest-free loan. There might be a free lunch after all; it just requires information.

When using the code health metric, I also tend to emphasize trends over absolute values. I use this to put a quality bar on any code that I touch. Should some code slide down and decline in health, then that might be a sign I've gone too far and need to pay down the accumulated debt. To make it actionable, I run these checks in a CI/CD pipeline (you can read more on how this works on my other blog).
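The trend-over-absolute-value idea can be sketched as a small gate that a pipeline step might run. This is an assumption-laden illustration, not CodeScene's API or its actual check: the per-module scores are hypothetical numbers supplied by whatever tool you use, and `declined_modules` and `gate` are names invented for this example.

```python
def declined_modules(previous: dict[str, float],
                     current: dict[str, float],
                     tolerance: float = 0.0) -> list[str]:
    """Return modules whose health score dropped by more than `tolerance`.

    Only modules present in both snapshots are compared, so the check
    looks at the trend rather than at any absolute threshold.
    """
    return sorted(
        name for name, score in current.items()
        if name in previous and previous[name] - score > tolerance
    )


def gate(previous: dict[str, float],
         current: dict[str, float],
         tolerance: float = 0.0) -> int:
    """Exit code for a CI step: 0 if nothing declined, 1 otherwise."""
    declined = declined_modules(previous, current, tolerance)
    for name in declined:
        print(f"code health declined: {name} "
              f"{previous[name]} -> {current[name]}")
    return 1 if declined else 0
```

A CI step could load the two snapshots (for example, scores from the target branch and from the change under review) and pass the result of `gate` to `sys.exit`. A new module with a low score does not fail this gate, which matches the emphasis on decline rather than absolute value; whether that is the right policy for new code is a separate decision.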

Finally, I tend to write a test for most new code. When operating in the long tail, I might not put the full effort into making the code easily testable, but I do like to leave a test as a safety-net for my future self.

The perils of improving existing code

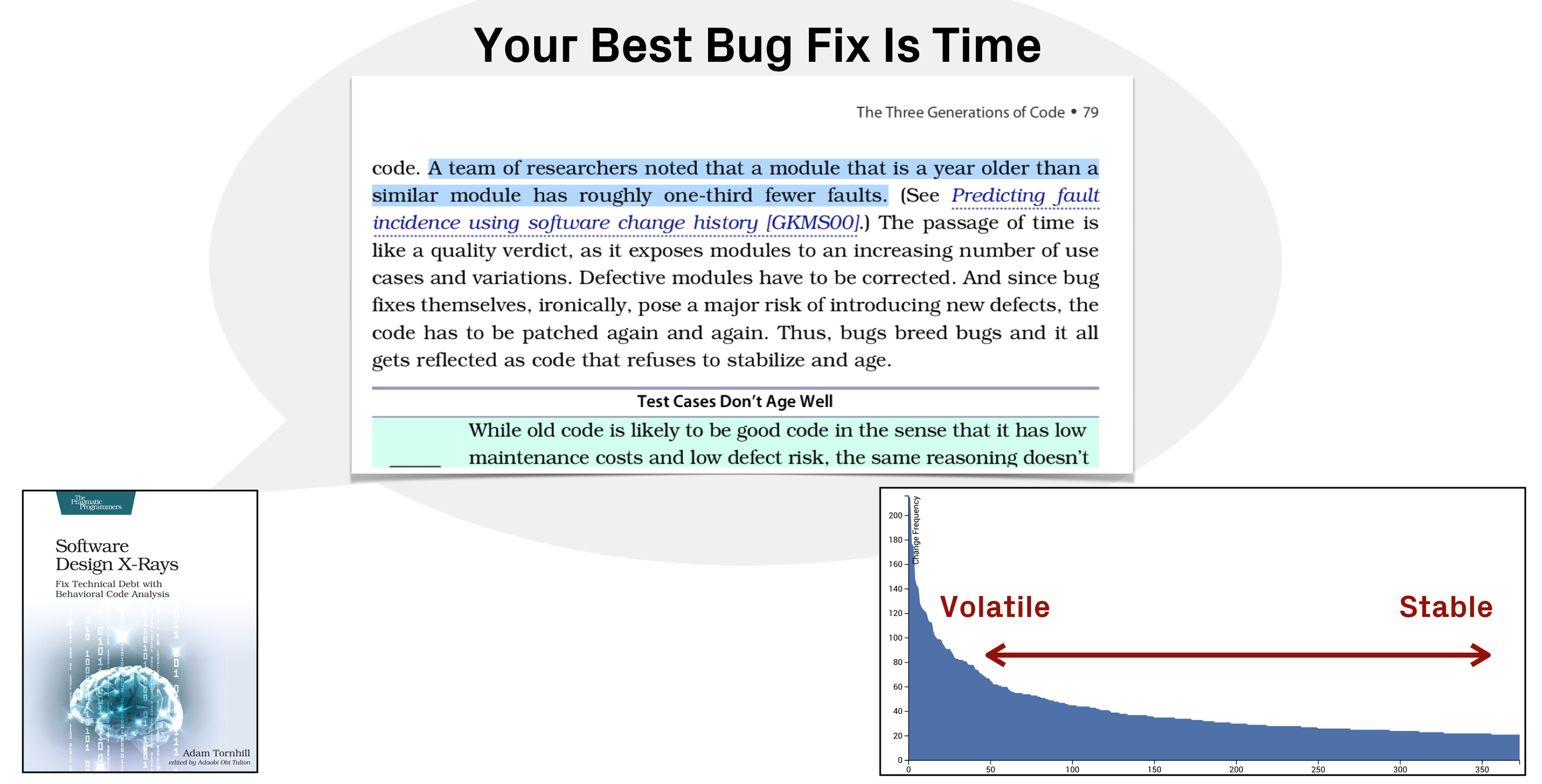

So far I've talked about the economics of knowing when -- and when not -- to invest in code quality. But there's a different perspective too, and it's an argument about correctness. You see, clean code that doesn't work is rarely rewarded. And this is where it gets interesting. It turns out that there's a correlation between old, stable code and correctness.

I covered this in Software Design X-Rays under the title "Your Best Bug Fix Is Time". In that section, I reference research that compares modules of different age, that is, the time since their last modification. That research finds that a module which is a year older than a similar module has roughly one-third fewer defects.

Code stabilizes for different reasons. One might be that it's dead code, which means it can be safely deleted (always a win). Or it might become stable because it just works and does its job. Sure, the code may still be hard to understand, but what is there is likely to have been debugged into functionality. It's been battle tested and it just works. Using hotspots, you can ensure it stays that way.

This correctness perspective is the main reason why I would like to put the Boy Scout Rule into context too; should we really be boy scouting code in the long tail? Code that we haven't worked on in a long time, and code that we might not touch again in the foreseeable future. I would at least balance the trade-offs between stabilizing code and refactoring it, and I tend to value stability higher. But again, it's a contextual discussion that cannot -- and shouldn't -- be taken as a general rule applicable to all code. All code isn't equal.

Code quality as a lever

I rarely use "clean code" as a term, simply because it suggests an absolute that I don't think exists, and the opposite -- dirty code -- is by definition unattractive. That said, I do appreciate much of the work by the clean code movement, I follow it closely, and I learn from it. I do think that the movement has pushed our industry in a good direction.

My point is just that "good" is contextual. And following a principle to the extreme means a diminishing return; we spend time polishing code with little pay off, and that's time we could have spent on activities that actually benefit our business. By making contextual decisions, guided by data from how our code evolves, we can optimize our efforts and view code quality as a design option that we might or might not need in specific situations. It all depends.

About Adam Tornhill

Adam Tornhill is a programmer who combines degrees in engineering and psychology. He's the founder of Empear where he designs the CodeScene tool for software analysis. He's also a public speaker and the author of Software Design X-Rays: Fix Technical Debt with Behavioral Code Analysis, the best selling Your Code as a Crime Scene, Lisp for the Web, and Patterns in C. Adam's other interests include modern history, music, and martial arts.


Source: https://www.adamtornhill.com/articles/code-quality-in-context/why-i-write-dirty-code.html
